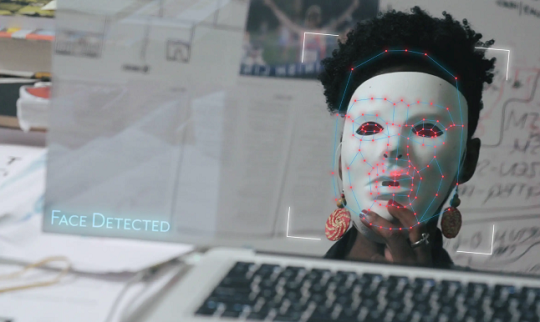

Bias and fairness in AI

Because AI algorithms are often trained to replicate decisions otherwise made by humans, they also tend to replicate human biases. Taking healthcare AI as a running example, we will first give a high-level introduction to AI and why it is susceptible to bias. Through examples, we will illustrate how bias enters AI algorithms and discuss whether we can compensate for it from an algorithmic point of view and, if so, how.

Following the technical discussion, we will move to a philosophical view on bias, fairness and ethics in AI. In this part of the presentation, we outline some of the ethical challenges relating to the deployment of AI systems as decision support in clinical practice: explainability, responsibility, and bias. We consider what it takes for an output to be explainable in the sense relevant for trustworthiness and patient-centered healthcare, whether the black-box nature of AI systems threatens to introduce a responsibility gap that undermines the moral responsibility of the healthcare professionals using the system, and how biased algorithms generate ethical concerns about the use of AI in clinical decision-making.

Free for members of IDA IT and DANSK IT